Topic identification, topic modeling

IV161 NLP in Practice Course, Course Guarantor: Aleš Horák

Prepared by: Zuzana Nevěřilová, Adam Rambousek, Jirka Materna

State of the Art

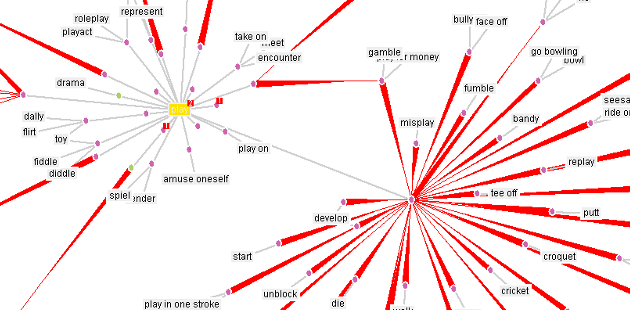

Topic modeling is a statistical approach for discovering abstract topics hidden in text documents. A document usually consists of multiple topics with different weights. Each topic can be described by the words that typically belong to it. The classic topic modeling methods are Latent Semantic Analysis and Latent Dirichlet Allocation. More recent methods build on text embeddings, for example by clustering document embeddings obtained from models such as BERT.
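The idea that a document mixes several weighted topics, each described by its typical words, can be illustrated with a small sketch. All topic names, words, and probabilities below are hypothetical toy values chosen for illustration; they do not come from any trained model.

```python
# Hypothetical toy topics: each topic is a probability distribution over words.
topics = {
    "sports": {"game": 0.5, "team": 0.4, "vote": 0.1},
    "politics": {"vote": 0.6, "party": 0.3, "team": 0.1},
}


def top_words(topic, n=2):
    """Return the n most probable words of a topic."""
    ranked = sorted(topic.items(), key=lambda kv: -kv[1])
    return [word for word, _ in ranked[:n]]


def word_distribution(doc_weights, topics):
    """Mix the topic word distributions using the document's topic weights."""
    mixed = {}
    for name, weight in doc_weights.items():
        for word, prob in topics[name].items():
            mixed[word] = mixed.get(word, 0.0) + weight * prob
    return mixed


# A hypothetical document that is 70 % about sports and 30 % about politics.
doc_weights = {"sports": 0.7, "politics": 0.3}
doc_words = word_distribution(doc_weights, topics)

for name, topic in topics.items():
    print(name, top_words(topic))
print(doc_words)
```

A topic model works in the opposite direction: it observes only the words of many documents and estimates both the topic word distributions and the per-document topic weights.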

References

- Weijie Xu, Xiaoyu Jiang, Srinivasan Sengamedu Hanumantha Rao, Francis Iannacci, and Jinjin Zhao. 2023. vONTSS: vMF based semi-supervised neural topic modeling with optimal transport. In Findings of the Association for Computational Linguistics: ACL 2023, pages 4433–4457, Toronto, Canada. Association for Computational Linguistics. https://aclanthology.org/2023.findings-acl.271/

- Lijimol George and P. Sumathy. 2023. An integrated clustering and BERT framework for improved topic modeling. International Journal of Information Technology, 15(4):2187–2195.

- David M. Blei, Andrew Y. Ng, and Michael I. Jordan. 2003. Latent Dirichlet Allocation. Journal of Machine Learning Research, 3:993–1022.

Practical Session

In this session, we will use Gensim to model the latent topics of newsgroup e-mails. We will focus on the Latent Semantic Analysis and Latent Dirichlet Allocation models.

- Create a text file named `iv161-tp-IIII.txt` where `IIII` is your university ID.

- Use this Google Colab Notebook.

- Follow instructions in the Colab.

- Save the required output into your `iv161-tp-IIII.txt` and submit it to the [en/NlpInPracticeCourse homework vault] (Odevzdavarna).