Named Entity Recognition

IV161 NLP in Practice course, course guarantor: Aleš Horák

Prepared by: Zuzana Nevěřilová, Aleš Horák

State of the Art

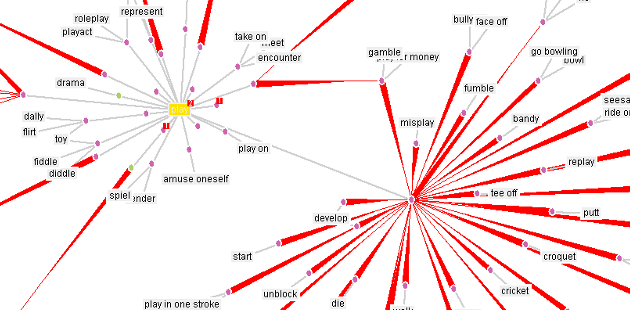

NER aims to recognize and classify names of people, locations, organizations, products, and artworks, and sometimes also dates, amounts of money, measurements (numbers with units), law or patent numbers, etc. Known issues are the ambiguity of words (e.g., May can be a month, a verb, or a name), the ambiguity of classes (e.g., HMS Queen Elizabeth contains a person's name but denotes a ship), and the inherent incompleteness of lists of named entities (NEs).
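The known issues can be made concrete with a naive list-based (gazetteer) tagger. The sketch below is a toy illustration, not a method from this course: the gazetteer contents, the PER/ORG class labels, and the function name `gazetteer_lookup` are hypothetical. It performs greedy longest-match lookup over whitespace-separated tokens.

```python
from typing import Dict, List, Tuple

# Hypothetical toy gazetteer: token sequence -> NE class (illustration only).
GAZETTEER: Dict[Tuple[str, ...], str] = {
    ("May",): "PER",
    ("Queen", "Elizabeth"): "PER",
    ("Pink", "Floyd"): "ORG",
}


def gazetteer_lookup(tokens: List[str],
                     gazetteer: Dict[Tuple[str, ...], str]) -> List[Tuple[str, str]]:
    """Greedy longest-match lookup; returns (surface form, class) pairs."""
    max_len = max(len(key) for key in gazetteer)
    found = []
    i = 0
    while i < len(tokens):
        # Try the longest candidate first so multi-word names win.
        for n in range(min(max_len, len(tokens) - i), 0, -1):
            candidate = tuple(tokens[i:i + n])
            if candidate in gazetteer:
                found.append((" ".join(candidate), gazetteer[candidate]))
                i += n
                break
        else:
            i += 1  # no gazetteer entry starts at this token
    return found


# Word ambiguity: "May" is a modal verb here, not a person.
print(gazetteer_lookup("May I ask a question ?".split(), GAZETTEER))
# [('May', 'PER')]
# Class ambiguity: "Queen Elizabeth" is part of a ship name here.
print(gazetteer_lookup("HMS Queen Elizabeth left Portsmouth".split(), GAZETTEER))
# [('Queen Elizabeth', 'PER')]
# Incompleteness: names missing from the list are never found.
print(gazetteer_lookup("Hannibal Lecter met Clarice".split(), GAZETTEER))
# []
```

All three failures come from matching surface strings without looking at the context, which is one reason why statistical and neural NER models use the surrounding words when deciding on a class.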

Named entity recognition (NER) is used mainly in information extraction (IE), but it can also improve other NLP tasks, such as syntactic parsing, where a multi-word name can be treated as a single unit.

Example from IE

| In 2003, Hannibal Lecter (as portrayed by Hopkins) was chosen by the American Film Institute as the number one movie villain. |

Hannibal Lecter <-> Hopkins (character portrayed by actor)
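Before a relation like this can be extracted, the entity mentions have to be located. NER models typically output one tag per token, often in the BIO scheme (B- begins an entity, I- continues it, O is outside). The sketch below decodes such a tag sequence into entity spans and then pairs the person mentions as naive relation candidates. The tags are assigned by hand for illustration, and the function name `bio_to_spans` and the pairing heuristic are assumptions, not part of the course material; deciding the actual relation type would need a further step.

```python
from itertools import combinations
from typing import List, Optional, Tuple


def bio_to_spans(tokens: List[str], tags: List[str]) -> List[Tuple[str, str]]:
    """Decode BIO tags into (entity text, entity class) pairs."""
    spans = []
    current_tokens: List[str] = []
    current_label: Optional[str] = None
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != current_label):
            # A new entity starts (a stray I- tag is treated as a beginning).
            if current_tokens:
                spans.append((" ".join(current_tokens), current_label))
            current_tokens, current_label = [token], tag[2:]
        elif tag.startswith("I-"):
            current_tokens.append(token)  # continue the open entity
        else:
            # "O" closes any open entity.
            if current_tokens:
                spans.append((" ".join(current_tokens), current_label))
            current_tokens, current_label = [], None
    if current_tokens:
        spans.append((" ".join(current_tokens), current_label))
    return spans


sentence = ("In 2003 , Hannibal Lecter ( as portrayed by Hopkins ) was chosen by "
            "the American Film Institute as the number one movie villain .")
tokens = sentence.split()
tags = ["O"] * len(tokens)
tags[3], tags[4] = "B-PER", "I-PER"                      # Hannibal Lecter
tags[9] = "B-PER"                                        # Hopkins
tags[15], tags[16], tags[17] = "B-ORG", "I-ORG", "I-ORG"  # American Film Institute

spans = bio_to_spans(tokens, tags)
print(spans)
# [('Hannibal Lecter', 'PER'), ('Hopkins', 'PER'), ('American Film Institute', 'ORG')]

# Naive candidate generation: every pair of person mentions.
candidates = [(a, b) for (a, la), (b, lb) in combinations(spans, 2)
              if la == lb == "PER"]
print(candidates)
# [('Hannibal Lecter', 'Hopkins')]
```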

Example concerning syntactic parsing

| Wish You Were Here is the ninth studio album by the English progressive rock group Pink Floyd. |

vs.

| Wish_You_Were_Here is the ninth studio album by the English progressive rock group Pink Floyd. |
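The second variant can be produced by joining the tokens of a recognized entity into one token, so that a parser no longer tries to analyse "Wish You Were Here" as a clause. The sketch below assumes entity spans are given as non-overlapping token index pairs (start inclusive, end exclusive); the function name `merge_entities` is hypothetical.

```python
from typing import List, Tuple


def merge_entities(tokens: List[str], spans: List[Tuple[int, int]]) -> List[str]:
    """Join each entity span [start, end) into one underscore-linked token."""
    for start, end in spans:
        if not 0 <= start < end <= len(tokens):
            raise ValueError(f"invalid span {(start, end)}")
    span_end = {start: end for start, end in spans}
    merged = []
    i = 0
    while i < len(tokens):
        if i in span_end:
            end = span_end[i]
            merged.append("_".join(tokens[i:end]))  # the whole NE becomes one token
            i = end
        else:
            merged.append(tokens[i])
            i += 1
    return merged


tokens = ("Wish You Were Here is the ninth studio album by the English "
          "progressive rock group Pink Floyd .").split()

# The album title occupies tokens 0-3.
print(" ".join(merge_entities(tokens, [(0, 4)])))
# Wish_You_Were_Here is the ninth studio album by the English progressive rock group Pink Floyd .

# Merging the band name as well (tokens 15-16).
print(" ".join(merge_entities(tokens, [(0, 4), (15, 17)])))
# Wish_You_Were_Here is the ninth studio album by the English progressive rock group Pink_Floyd .
```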

References

- Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. 2019. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. arXiv:1810.04805. https://arxiv.org/abs/1810.04805

- Afshin Rahimi, Yuan Li, and Trevor Cohn. 2019. Massively Multilingual Transfer for NER. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, pages 151–164, Florence, Italy. Association for Computational Linguistics. https://aclanthology.org/P19-1015/

- Zihan Liu, Yan Xu, Tiezheng Yu, Wenliang Dai, Ziwei Ji, Samuel Cahyawijaya, Andrea Madotto, and Pascale Fung. 2020. CrossNER: Evaluating Cross-Domain Named Entity Recognition. arXiv:2012.04373. https://arxiv.org/abs/2012.04373

Practical Session

Multilingual Named Entity Recognition

In this workshop, we train an NER model for any of the languages that WikiAnn supports. We work with the Hugging Face Transformers library, its multilingual BERT model for token classification, and the WikiAnn training data.

- Create <YOUR_FILE>, a text file named iv161-IIII-03.txt, where IIII is your university ID number.

- Open the Google Colab Python notebook.

- Follow the instructions in the notebook. There are four obligatory tasks. Write down your answers in <YOUR_FILE>.

- Submit <YOUR_FILE> to the homework vault (Odevzdavarna).
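Fine-tuning BERT for token classification on WikiAnn usually involves aligning the word-level labels with BERT's subword tokens, because one word can be split into several pieces. The sketch below is one common way to do this, not necessarily what the notebook does. It assumes the WikiAnn label order used on the Hugging Face Hub (O, B-PER, I-PER, B-ORG, I-ORG, B-LOC, I-LOC) and the `word_ids()` output of a fast tokenizer, where `None` marks special tokens such as [CLS] and [SEP]. Only the first subword of each word keeps its label; the rest get -100, the default `ignore_index` of PyTorch's cross-entropy loss. The function name `align_labels` and the subword segmentation in the example are hypothetical.

```python
from typing import List, Optional

# Assumed WikiAnn tag inventory, in label-id order.
LABELS = ["O", "B-PER", "I-PER", "B-ORG", "I-ORG", "B-LOC", "I-LOC"]
IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def align_labels(word_ids: List[Optional[int]], word_labels: List[int]) -> List[int]:
    """Map word-level label ids onto subword tokens.

    word_ids: for each subword token, the index of its source word,
              or None for special tokens ([CLS], [SEP], padding).
    word_labels: one label id per word.
    """
    aligned = []
    previous_word = None
    for word_id in word_ids:
        if word_id is None:
            aligned.append(IGNORE_INDEX)           # special token: no loss
        elif word_id != previous_word:
            aligned.append(word_labels[word_id])   # first subword of a word
        else:
            aligned.append(IGNORE_INDEX)           # later subwords are ignored
        previous_word = word_id
    return aligned


words = ["Pink", "Floyd", "recorded", "in", "London"]
word_labels = [LABELS.index(t) for t in ["B-ORG", "I-ORG", "O", "O", "B-LOC"]]
# Hypothetical segmentation: [CLS] Pink Flo ##yd recorded in London [SEP]
word_ids = [None, 0, 1, 1, 2, 3, 4, None]

print(align_labels(word_ids, word_labels))
# [-100, 3, 4, -100, 0, 0, 5, -100]
```

With a real fast tokenizer, `word_ids` would come from `tokenizer(words, is_split_into_words=True).word_ids()`. Labelling every subword (with I- tags on continuations) is a common alternative; ignoring continuations keeps exactly one prediction per word, which makes word-level evaluation straightforward.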