Generative Language Models

IV161 NLP in Practice Course, Course Guarantor: Aleš Horák

Prepared by: Aleš Horák

State of the Art

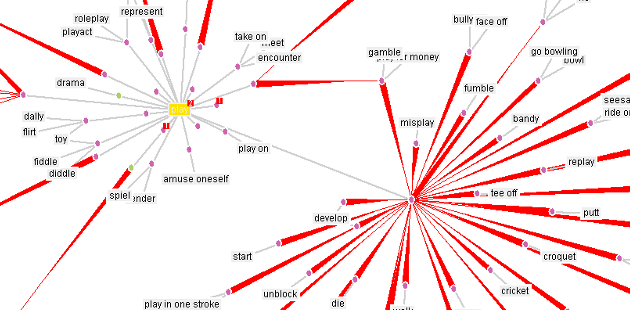

Generating text essentially means predicting the next word (more precisely, the next token) in a sequence. Starting from an initial prompt (a seed), the model predicts the most likely continuation given the context, appends it to the sequence, and repeats the step. State-of-the-art models, such as those based on the Generative Pre-trained Transformer (GPT) architecture, stack multiple attention layers, which lets each prediction draw on the entire preceding context and enables the models to handle complex tasks.
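The generation loop described above can be illustrated with a toy example. The following is a minimal sketch, not how GPT models work internally: it swaps the Transformer for a simple bigram counter so that the predict-append-repeat loop stays visible. The names `train_bigram` and `generate` and the tiny corpus are assumptions made for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for every word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, prompt, max_new_words=5):
    """Greedy generation: repeatedly append the most frequent next word.

    Assumes a non-empty prompt. Only the last word is used as context,
    unlike a Transformer, which attends to the whole sequence.
    """
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = counts.get(words[-1])
        if not candidates:
            break  # the last word was never seen with a successor
        next_word = candidates.most_common(1)[0][0]
        words.append(next_word)
    return " ".join(words)


# Hypothetical toy corpus, chosen only to keep the example small.
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigram(corpus)
print(generate(model, "the", max_new_words=3))  # the cat sat on
```

A real language model replaces the bigram counts with a neural network that outputs a probability for every token in its vocabulary, and it often samples from that distribution instead of always picking the most likely token.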

Assistant models, such as ChatGPT, are built on this foundation, which makes them adept at interpreting requests and generating human-like responses. A key aspect of using these models effectively is *prompt engineering*: crafting well-structured inputs that guide the model's behavior and improve the quality of its output.
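One common way to structure an input is a few-shot prompt: an instruction, a handful of solved examples, and then the new case left open for the model to complete. The sketch below builds such a prompt as a plain string. The function name `build_sentiment_prompt`, the wording of the instruction, and the example reviews are all assumptions for illustration, not a prescribed template.

```python
def build_sentiment_prompt(text, examples):
    """Assemble a few-shot prompt for binary sentiment classification.

    `examples` is a list of (review, label) pairs shown to the model
    before the review we actually want classified.
    """
    lines = [
        "Classify the sentiment of each review as positive or negative.",
        "",
    ]
    for review, label in examples:
        lines.append(f"Review: {review}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final item is left open so the model fills in the label.
    lines.append(f"Review: {text}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Hypothetical examples; any labeled reviews would do.
examples = [
    ("I loved every minute of it.", "positive"),
    ("A dull and predictable plot.", "negative"),
]
prompt = build_sentiment_prompt("The acting was superb.", examples)
print(prompt)
```

The resulting string can be sent to an assistant model as the user message. Changing the instruction, the number of examples, or their order is a typical prompt engineering experiment.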

References

- Vaswani, A. et al. (2017): Attention Is All You Need. arXiv preprint: https://arxiv.org/abs/1706.03762

- Radford, A. et al. (2018): Improving Language Understanding by Generative Pre-Training. OpenAI: https://cdn.openai.com/research-covers/language-unsupervised/language_understanding_paper.pdf

- Navigli, R., Conia, S., & Ross, B. (2023): Biases in Large Language Models: Origins, Inventory, and Discussion. Journal of Data and Information Quality, 15(2): https://doi.org/10.1145/3597307

Practical Session

We will work in the Google Colab notebook. The task is to experiment with prompting an assistant model to solve sentiment analysis and math problems.
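When comparing prompts in such experiments, it helps to score the model's free-text answers automatically. The following is a minimal sketch of one way to do that for the sentiment task, assuming the answers mention the word "positive" or "negative". The helper names `extract_label` and `accuracy` and the sample responses are hypothetical and not part of the course notebook.

```python
import re


def extract_label(response):
    """Return the first 'positive' or 'negative' found in a response.

    Returns None when neither word appears. This is a simple heuristic:
    it does not handle negations such as "not positive".
    """
    match = re.search(r"\b(positive|negative)\b", response.lower())
    return match.group(1) if match else None


def accuracy(responses, gold):
    """Fraction of responses whose extracted label equals the gold label."""
    if not gold:
        return 0.0
    correct = sum(extract_label(r) == g for r, g in zip(responses, gold))
    return correct / len(gold)


# Hypothetical model outputs and gold labels for illustration only.
responses = ["Positive.", "I think it is negative", "unclear"]
gold = ["positive", "negative", "positive"]
print(accuracy(responses, gold))
```

The same idea carries over to math problems by extracting the final number from the response instead of a label.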